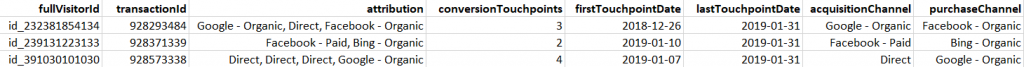

Are you ready to get you mind blown away? This R script is probably the best script in the world. Well maybe not, but it's definitely up there in the top. What the script will produce is the output below.

If you are currently using Google Analytics 360 and you have connected it to Google Big Query you can put a big smile on because you will be able to recreate this with your own data! If you're not using GA360 with BigQuery then you might be able to recreate it if you don't have a lot of daily users (to avoid sampling) and you are tracking parameters that can be used to define sessions, and users. I can strongly recommend Simo Ahava's solution that I always implement in GA to be able to split the visitors by session and client ID. How to use that instead of BigQuery in my script you will have to figure out for yourself though. Now we're gonna deep dive into the actual script.

Creating the data table with BigQuery

First off we need to get the data from GA360 in BigQuery into R. The best way to do this is to connect from R to BQ. We will also need dplyr later on so we're gonna import that library as well. Authorize the connection from BigQuery to R and then type your project ID and a dataset ID where you can store a new table.

library(bigQueryR)

library(googleCloudStorageR)

library(googleAuthR)

library(dplyr)

options(googleAuthR.scopes.selected =

c("https://www.googleapis.com/auth/bigquery",

"https://www.googleapis.com/auth/devstorage.full_control",

"https://www.googleapis.com/auth/devstorage.read_write",

"https://www.googleapis.com/auth/cloud-platform"))

bqr_auth()

bqr_global_project("YOUR-BQ-PROJECT-ID")

bqr_global_dataset("YOUR-BQ-DATASET-ID")

Next step is to write the query that we have to run in BigQuery. What we want to do is to create a table with the columns fullVisitorId, transactionId, date, visitStartTime and channel. The rest is going to be calculated in R. The channel grouping is up to you to decide. In my example I will only be showing some standard groupings. By the way, I am not a SQL wizard and if you think you have a better solution for writing this query I would appreciate if you would comment it below.

query <- "

SELECT

CONCAT('id_', CAST(fullvisitorId AS STRING)) AS fullVisitorId,

MAX(transactionId) AS transactionId,

date,

visitStartTime,

channel

FROM (

SELECT

fullVisitorId,

transactionId,

date,

visitStartTime,

CASE

WHEN sm LIKE '%google%cpc%' AND (adn != 'content' OR adn IS NULL) THEN 'Google - Paid'

WHEN sm LIKE '%bing%cpc%' THEN 'Bing - Paid'

WHEN sm LIKE 'google%organic' THEN 'Google - Organic'

WHEN sm LIKE 'bing%organic' THEN 'Bing - Organic'

ELSE 'Other'

END AS channel,

CASE

WHEN MAX(transactionId) OVER (PARTITION BY fullVisitorId) IS NOT NULL THEN 1

ELSE 0

END AS convertingCustomer

FROM (

SELECT

fullVisitorId,

date,

visitStartTime,

CONCAT(trafficSource.source, ' / ', trafficSource.medium) AS sm,

trafficSource.adwordsClickInfo.adNetworkType AS adn,

hit.transaction.transactionId AS transactionId

FROM

`YOUR-PROJECT-NAME.YOUR_DATASET_ID.ga_sessions_20*` ga, # Write your own IDs

UNNEST(ga.hits) hit

WHERE

parse_DATE('%y%m%d',

_TABLE_SUFFIX) BETWEEN DATE('2018-01-01')

AND DATE('2018-12-31')

GROUP BY

fullVisitorId,

date,

trafficSource.source,

trafficSource.medium,

visitStartTime,

trafficSource.adwordsClickInfo.adNetworkType,

hit.transaction.transactionId ))

WHERE

convertingCustomer = 1

GROUP BY

fullvisitorId,

date,

visitStartTime,

channel

ORDER BY

fullVisitorId,

visitStartTime

"

As you can see, we are storing the query in a variable called 'query'. This is only to make the script easier to understand and rewrite. If you want you can type it into the actual call to BigQuery. Make sure to replace the IDs in the script with your own and perhaps change the time period. I would recommend to run a shorter period when you're testing it out the first time.

Since we are working with a lot of data (or at least I am, but probably you as well if you're using GA360) we can't import the data from the query by using bqr_query(). Instead we have to create a new table in BigQuery with the data output from our query and then store the table data in a bucket.

bqr_query_asynch(query = query,

destinationTableId = "GA_MCF",

writeDisposition = "WRITE_TRUNCATE",

useLegacySql = FALSE)

job <- bqr_extract_data(projectId = "YOUR-PROJECT-ID",

datasetId = "YOUR_DATASET_ID",

tableId = "GA_MCF",

cloudStorageBucket = "YOUR_BUCKET_ID",

filename = "GA_MCF.csv",

fieldDelimiter = ",",

destinationFormat = "CSV")

bqr_wait_for_job(job)

Fill out your own IDs where needed (you might need to create a bucket in BigQuery if you don't have one already). Don't forget the last line! This makes sure that you don't try to read things that don't exist yet.

Getting the data into R and cleaning it

Now let's get the data into R! I haven't found a good way to import the data directly from the bucket so instead we will download the data into a CSV file and put it in the current R project folder. Then we can read the file with read.csv().

gcs_get_object("GA_MCF.csv", bucket = "YOUR_BUCKET_ID", saveToDisk = "GA_MCF.csv", overwrite = TRUE)

mcf <- read.csv("GA_MCF.csv")

Now we have to clean the data. We're gonna change the structure and then remove duplicated transaction rows (if there are any). If you're not sure why the subset function is taking NAs into consideration it's because we will get transactionId as NA for each session that did not contain a transaction. You can check the data before running this part by typing head(mcf, 20).

mcf$fullVisitorId <- as.character(mcf$fullVisitorId) mcf$transactionId <- as.character(mcf$transactionId) mcf$channel <- as.character(mcf$channel) mcf$date <- as.Date(as.character(mcf$date), '%Y%m%d') # REMOVE DUPLICATED TRANSACTIONS mcf <-mcf[order(mcf$fullVisitorId, mcf$visitStartTime),] mcf <- subset(mcf, !(duplicated(transactionId)) | is.na(transactionId))

We will also have to delete all rows that contain sessions that exist after the last conversion for the user. We need the journey to end with a conversion.

keys <- mcf[which(!(is.na(mcf$transactionId))),c('fullVisitorId','transactionId','visitStartTime')]

getLastTransaction <- function(keys){

df <- group_by(keys, fullVisitorId) %>%

summarise(lastTransaction = max(visitStartTime)) %>%

as.data.frame()

keys <- merge(keys, df, by = "fullVisitorId", all.x = TRUE)

return (keys[-3])

}

keys <- getLastTransaction(keys)

mcf <- merge(mcf, keys, by = "fullVisitorId", all.x = TRUE)

mcf <-mcf[order(mcf$fullVisitorId, mcf$visitStartTime),]

mcf <- subset(mcf, visitStartTime <= lastTransaction)

The rows check the maximum visitStartDate for each user's last conversion. Then we can use that information to create a subset of the original data.

Creating the attribution table

Now that we have the data structured and sorted in the way we need we can run the code for creating the multi channel funnel paths. The smartest way to do this is by grouping the data using dplyr. But in order to be able to group it we first have to add transactionId to all blank rows. We can do this by using the fill function in tidyr. Since the data is sorted correctly we can simply fill out the blank values with the previous existing one starting from the bottom.

mcf <- mcf %>% fill(transactionId, .direction = "up")

mcf <- group_by(mcf, fullVisitorId, transactionId) %>%

summarise(visitStartTime = min(visitStartTime),

firstTouchpointDate = min(date),

lastTouchpointDate = max(date),

multiChannelFunnel = paste(channel, collapse = ", "),

acquisitionChannel = channel[1],

conversionChannel = channel[length(channel)],

touchpoints = length(channel)) %>%

as.data.frame()

mcf$conversionLagDays <- as.numeric(mcf$lastTouchpointDate - mcf$firstTouchpointDate, units = "days")

Now you have a data frame where each row represents a customer journey from first entrance on the site to the purchase. Notice that after a purchase the user will start a new journey. Make sure you save the file in order to use the data in other systems if you need to or to access it quickly. I would recommend BigQuery but you could just as easily save it as a CSV file.

bqr_create_table(tableId = "GA_MCF_Calculations", template_data = mcf) bqr_upload_data(upload_data = mcf, tableId = "GA_MCF_Calculations", overwrite = TRUE) write.csv(mcf, "GA_MCF_Calculations.csv", row.names = FALSE)

I hope you find this useful and feel free to ask any questions you might have in the comments below. And as usual you can find the entire script on GitHub.

Comments